Some fun data-mining of StreetEYE headlines

Belated end-of-year roundup.

Well, congrats to the Patriots and all my Boston homies. That was a catch and a comeback for the ages. Huger than Joe Montana back in the 80s, maybe GOAT. Y’all should still learn to talk and to drive like normal human beings, but enjoy a legendary victory.

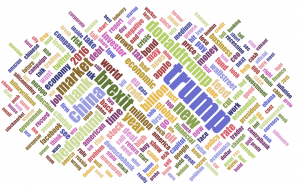

So, last year I did a word cloud of the most common terms in 2015 StreetEYE headlines. Somehow I never got around to it at New Year’s this year. So here it is! (click to embiggen)

2016 StreetEYE Headline Word Cloud

Interesting to compare … ‘Trump’ was yuge, and ‘Brexit’ was the other big one. ‘Greece’ was big in 2015, and faded like
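For the curious, a word cloud is mostly just term counting with a picture on top. Here is a minimal sketch of the counting step, not the code actually used for the cloud above. The `STOPWORDS` set and the `term_counts` helper are hypothetical names made up for illustration, and the sample is three headlines borrowed from the top-clicked list.

```python
import re
from collections import Counter

# Hypothetical, tiny stopword list; a real one would be much longer.
STOPWORDS = {"a", "an", "the", "of", "to", "in", "on", "is", "it", "and", "for", "with"}

def term_counts(headlines):
    """Count how often each non-stopword term appears across the headlines."""
    counts = Counter()
    for headline in headlines:
        # Lowercase, then treat each run of letters as one term,
        # so "Hedge-Fund" splits into "hedge" and "fund".
        for term in re.findall(r"[a-z]+", headline.lower()):
            if term not in STOPWORDS:
                counts[term] += 1
    return counts

sample = [
    "Are US Stocks Overvalued?",
    "How Does This Hedge-Fund Manager Make So Much Money",
    "A hedge fund has laid out why it is closing",
]
counts = term_counts(sample)

# The most frequent terms are the ones a word cloud would draw largest.
for term, n in counts.most_common(5):
    print(term, n)
```

Font size in the cloud is then scaled by each term's count, which is why ‘Trump’ ends up so large.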

OK, while we’re clearing up unfinished business, here are the top clicked stories of 2016.

1. Economics on Buying vs Renting a House

2. A hedge fund has laid out why it is closing — and it is enough to set alarm bells ringing everywhere

3. Goldman Sachs Says It May Be Forced to Fundamentally Question How Capitalism Is Working

4. Trader exposes sexist horrors of the Wall Street ‘frat house’

5. FANG Is So 2015… BARF In 2016

6. With a single vote, England just screwed us all

7. Let Me Remind You Fuckers Who I Am, by @shitHRCcantsay

8. My very peculiar and speculative theory of why the GOP has not stopped Donald Trump

9. How Does This Hedge-Fund Manager Make So Much Money

10. Are US Stocks Overvalued?

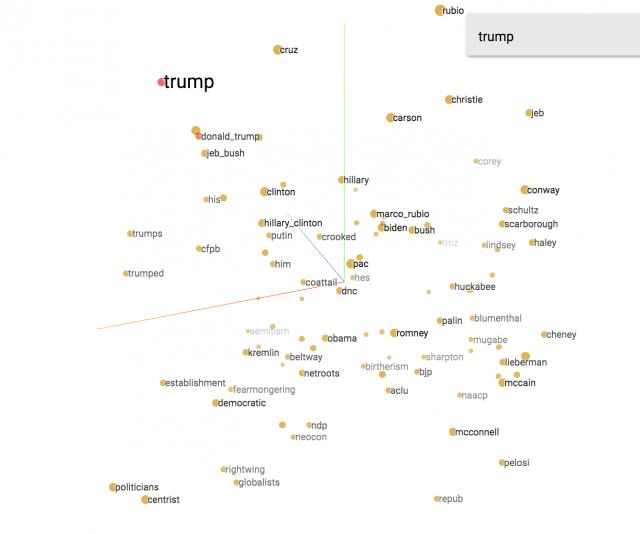

Finally, if you’re REALLY into mad science… here’s a semantic analysis of more than 1,000,000 headlines on StreetEYE (not just the front page, but everything that was shared by anyone we follow on social media). The app does the analysis in your browser, so it will take a minute to download the data, and it needs an up-to-date computer.

In the top right search, type a term, like ‘Trump’, then click on a completion, then click on ‘Isolate 101 data points’, and you’ll see something like the below (click to embiggen):

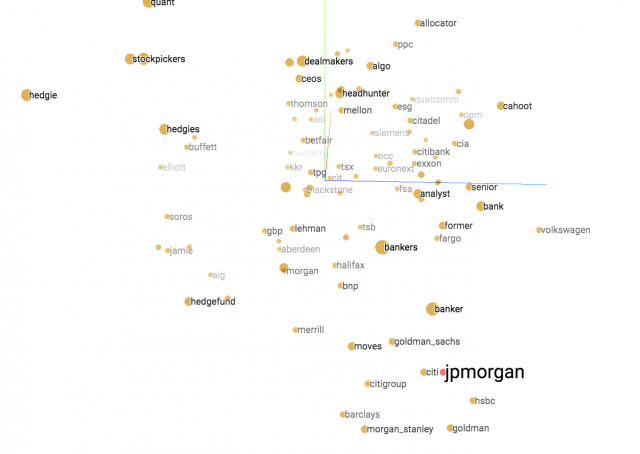

To try another search, choose “Show all data”, type in something new like “jpmorgan”, click on the completion, and you’ll see one like this.

What is the point? This is a way for computers to infer the meaning of text based on context. Possibly it gives insight into how humans do it. A good representation of meaning can let us cluster related stories together. It can be used as an input to predict which stories will go viral and to improve the relevance and timeliness of headlines, or for other purposes like machine translation.
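To make "meaning from context" a bit more concrete, here is a toy sketch. It is an assumption-heavy stand-in, not the method behind the visualization: each word is represented by counts of the other words it shares a headline with, and words are compared by cosine similarity. The six headlines are made up for illustration, and `cooccurrence_vectors`, `cosine` and `nearest` are hypothetical helper names.

```python
import math
import re
from collections import Counter, defaultdict

def tokenize(headline):
    """Lowercase a headline and split it into runs of letters."""
    return re.findall(r"[a-z]+", headline.lower())

def cooccurrence_vectors(headlines):
    """Map each word to a Counter of the words it shares a headline with."""
    vectors = defaultdict(Counter)
    for headline in headlines:
        words = set(tokenize(headline))
        for word in words:
            for other in words:
                if other != word:
                    vectors[word][other] += 1
    return dict(vectors)

def cosine(u, v):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(u[k] * v[k] for k in u if k in v)
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)

def nearest(word, vectors, k=3):
    """Return the k words whose context vectors are most similar to word's."""
    target = vectors.get(word, Counter())
    scored = [(cosine(target, vec), other)
              for other, vec in vectors.items() if other != word]
    scored.sort(reverse=True)
    return scored[:k]

# Made-up headlines, for illustration only.
headlines = [
    "trump wins election",
    "clinton loses election",
    "trump rally in ohio",
    "clinton rally in ohio",
    "jpmorgan earnings beat estimates",
    "goldman earnings beat estimates",
]
vectors = cooccurrence_vectors(headlines)

for score, other in nearest("jpmorgan", vectors):
    print(f"{other}: {score:.2f}")
```

In this toy data ‘jpmorgan’ and ‘goldman’ never appear in the same headline, yet they come out as nearest neighbors because they keep the same company. That is the basic intuition; real systems learn dense vectors from far more text, and searching for a term in the app is the same kind of nearest-neighbor lookup at scale.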

For the code that was used to generate the visualization inputs, see here.